Gain More Insight With Better Connected Data

Observability is essential for managing complex systems in modern environments such as microservices architectures, cloud-based deployments, and dynamic container orchestration platforms. By having a comprehensive understanding of system behavior and the ability to quickly identify and address issues, organizations can ensure optimal performance, reliability, and user experience.


01

Observability can give you the insights you need to be predictive, rather than just reactive.

Find The Opportunities To Drive Your Business Into The Future

Data tells a story. It tells you what’s happening and what’s happened and, when the two are combined, what might happen next. Observability means generating insights without having to search for them. It’s about surfacing new and interesting connections across data relationships. When you apply that to streaming performance, for example, it can identify where outages or quality degradations are likely to occur before they happen.

But for observability to exist, all the data must live in the same place. That requires a different approach to collecting and processing the information from your endpoints. First, you need to do the collecting yourself. That will allow you to process the data in a way that is meaningful to your business. Think measurement intermediation. Second, you need to enrich the data at the time of collection by linking external data sources to what you collect within your endpoints.
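As a rough illustration of enriching at the time of collection, the sketch below attaches metadata from an external source to a raw player event before it is delivered anywhere. Everything in it is hypothetical: the `CONTENT_CATALOG` lookup, the `enrich_event` function, and the event field names are assumptions made for this example and are not part of the Datazoom platform or its API.

```python
from datetime import datetime, timezone

# Hypothetical external data source: a content catalog keyed by content ID.
CONTENT_CATALOG = {
    "vid-123": {"title": "Season Finale", "license_tier": "premium"},
}


def enrich_event(raw_event, catalog):
    """Return a copy of raw_event with catalog metadata and a collection timestamp."""
    enriched = dict(raw_event)  # copy so the raw event is left untouched
    # Link the external source to the collected event; unknown IDs get empty metadata.
    enriched["content"] = catalog.get(raw_event.get("content_id"), {})
    enriched["collected_at"] = datetime.now(timezone.utc).isoformat()
    return enriched


# Hypothetical raw event as it might arrive from a video player.
event = {"event": "buffer_start", "content_id": "vid-123", "player": "web"}
enriched = enrich_event(event, CONTENT_CATALOG)
print(enriched)
```

Because the lookup happens before delivery, the record that reaches the data lake already carries the business context, so no join is needed later to connect a playback event with the content it belongs to.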

Together, these two changes will deliver a datastream to your data lake that is not only full of insight but also ready to be visualized without any additional post-processing. And that will help carry your business into the future as you discover new opportunities to reduce subscriber churn, optimize your workflow to improve viewer satisfaction, and even find new ways to generate revenue.

But generating observability isn’t without its challenges:

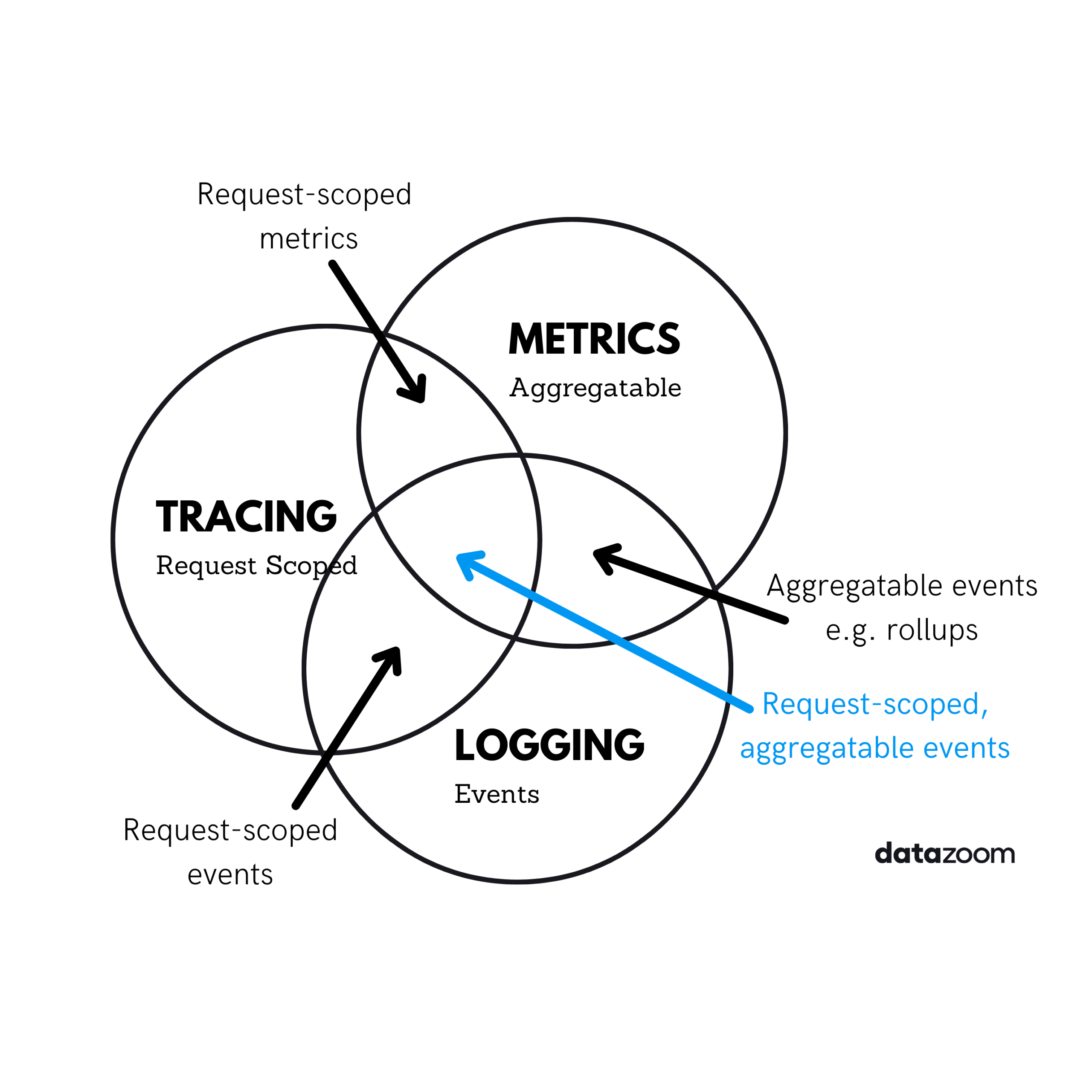

- Modern systems generate vast amounts of data from various sources, including metrics, logs, and traces

- Real-time alerts require real-time data delivery

- Applications must be instrumented to collect the right data, at the right frequency, without impacting performance

- Identifying root causes in distributed systems requires correlating data and tracing requests across different services and components

The Datazoom platform can help you overcome those challenges by collecting, processing, and delivering your data to a single location in a cost-effective manner.

02

There is clear business value in delivering data to tools that can be used for observability.

What Does Observability Mean For Your Business?

Storing the data collected from your video player or other endpoints directly in storage controlled by you provides several key advantages to your business:

Make Faster Business Decisions

When you deliver enriched data streams to your data lake, your data isn’t spread out all over the place, so you can start using it as soon as it hits your tools. That means you’ll be able to make business decisions much faster.

Increase Your Reporting Accuracy

Reports drive the business. Whether you are visualizing ad inventory forecasts, content licensing spend, or subscriber churn, when the data is all in one place, standardized and normalized before it hits the data lake, you can rest assured that those reports will more accurately represent the data.

Identify New Business Opportunities

With all that data in one place, insights will bubble up in interesting and powerful ways. Yes, observability will help you see the patterns in performance and availability, but it will also show you how to better engage with your viewers, which content you should recommend to which users, and how you might shape your business to improve the experience for future subscribers.

03

Read about how Datazoom enables businesses to experience cost-effective observability.

Read The Whitepaper On How Datazoom Helps Companies Enable Observability

Interested in understanding how you can use Datazoom to enable observability in your streaming business? Just fill out the form below to get an email link to download the whitepaper.